Can Big Data Launch Cancer Research?

Guest Post by William G. Nelson, MD, PhD

Editor-in-Chief, Cancer Today

In his 2016 State of the Union address, President Barack Obama announced he would put Vice President Joe Biden in charge of “mission control” for a new “moonshot” initiative that will “make America the country that cures cancer once and for all.”

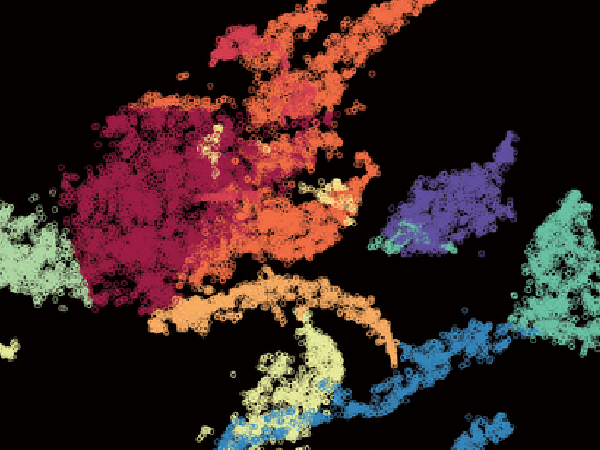

One key priority in the vice president’s cancer plan is the collection, sharing, and analysis of big data. Advances in information science and technology have created an astonishing capacity to gather, transfer, store, and analyze large and diverse collections of data, measured in petabytes (10^15 bytes). Is cancer research ready for big data?

Big data was envisioned initially for e-commerce to explain trends and inform management decisions, but now it influences thinking in fields as diverse as astronomy, geology, and biomedicine. Large-scale analyses of big data in cancer medicine promise to yield insights into disease and treatment that could transform care.

For this promise to be realized, however, the electronic medical record (EMR) needs to be reimagined. Traditional EMR software was created for health care billing. Newer EMR tools provide medical decision support and offer prompts for laboratory and radiologic testing to improve outcomes while controlling costs. To exploit big-data analytics, the EMR must evolve to provide a quantitative medical portrait of an individual. For cancer, this would include genomic data, imaging data, and patient-reported symptom data, in addition to the conventional narrative history and physical examination results. (The American Association for Cancer Research, publisher of Cancer Today, is leading AACR Project GENIE, an international data-sharing project that aggregates and links cancer genomic data with clinical outcomes from tens of thousands of cancer patients treated at multiple international institutions.)

Moving forward, all of the information deposited in the EMR should reflect the best measurement science principles, with special attention to the accuracy, reproducibility, and consistency of data. Verbal descriptions of skin lesions might be replaced by photography. Descriptions of heart murmurs heard through a stethoscope might be replaced by recordings of the actual sounds. A test for a cancer biomarker in a biopsy, scored on a numbered scale, might be replaced by a scanned image of the stained slide. Once data collection and storage have been optimized for patient care, new approaches to analyzing big data can be unleashed, creating a “learning health care system” that incorporates innovation in real time and assesses its impact seamlessly.

However, safeguards introduced to protect patients participating in biomedical research and to secure the privacy of health information may be significant barriers to accessing big data. In a 2014 piece in the New England Journal of Medicine, Ruth Faden, Tom Beauchamp, and Nancy Kass argue that biomedical research ethics can be modernized to address these concerns. Their Common Purpose Framework for ethics aims to respect the rights and dignity of patients, incorporate physician judgment, deliver optimal care, avoid undue risks and burdens, reduce inequities, and foster outcome improvement.

The guidance computer used on the Apollo spacecraft sent to the moon had 4,096 bytes of random-access memory and 73,728 bytes of read-only core rope memory. If EMRs can be upgraded and a new ethical framework governing access to health care data can be created, we will see if computers able to manage petabytes of information can deliver on the promise of big data for a cancer moonshot.
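To give a rough sense of the gap in scale, the short Python sketch below compares the Apollo memory figures quoted above with a single petabyte, taken here as 10^15 bytes. The variable names are illustrative only, and the comparison assumes the two Apollo figures can simply be added together.

```python
# Memory figures for the Apollo guidance computer, as quoted in the column.
AGC_RAM_BYTES = 4_096      # random-access memory
AGC_CORE_BYTES = 73_728    # core (rope) memory

# One petabyte, using the decimal definition of 10**15 bytes.
PETABYTE_BYTES = 10**15

# Assumption for illustration: treat total memory as the sum of both figures.
agc_total_bytes = AGC_RAM_BYTES + AGC_CORE_BYTES

# How many Apollo-sized memories would fit in one petabyte?
ratio = PETABYTE_BYTES / agc_total_bytes

print(f"Apollo guidance computer memory: {agc_total_bytes:,} bytes")
print(f"One petabyte is about {ratio:.2e} times larger")
```

By this estimate, a single petabyte holds on the order of ten billion times as much information as the computer that guided the original moonshot.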

William G. Nelson, MD, PhD, is the editor-in-chief of Cancer Today, the quarterly magazine for cancer patients, survivors, and caregivers published by the American Association for Cancer Research. Dr. Nelson is the Marion I. Knott professor of oncology and director of the Sidney Kimmel Comprehensive Cancer Center at Johns Hopkins in Baltimore. You can read his complete column in the summer 2016 issue of Cancer Today.